I’ll give it to you straight: how does it make sense to develop a voice-based game?

Microphones, in addition to physical input devices (keyboard, mouse, touch screen), are undeniably everywhere. Naturally, they are included in all mobile phones, but are also becoming more and more common in home computing. At the same time, speech recognition technology has also reached a level where it can handle a microphone as an increasingly complex input device. It’s only natural to ask: how can a player control such complex processes as games only via voice-based control?

A few years back, before the advent of voice-controlled AI assistants, relatively few applications actively used signals from a microphone as their primary input. Today, however, these assistants have sparked a technological competition that has made speech recognition applications not only more advanced but also much more accessible to developers through various speech APIs.

However, before we get into the development of voice-based games, it’s worth taking a closer look at the industry. What important considerations should be taken into account when developing such games, in which types of games does it make sense to use the technology at all, and, ultimately, what are the greatest obstacles standing in the way of the widespread use of voice-controlled games?

Speech recognition vs. voice recognition

It is important to distinguish between these two concepts. Speech recognition deals with the recognition of patterns and connections present in human languages. For example, if the user pronounces the word “up,” the system recognizes the word based on the properties of the audio signal and is able to interpret it by comparing it to its language database. It can even decode complex sentences such as questions or instructions.

In contrast, voice recognition merely means identifying and quantifying the sound properties that also determine words, for example volume, pitch, or the characteristics of individual sounds.
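To make the distinction concrete, here is a minimal sketch of how two such sound properties could be measured from a short frame of audio. It is an illustration only, not taken from any particular engine: the function names, the 80 to 500 Hz search range, and the naive autocorrelation method are all assumptions, and a real game would read frames from a microphone rather than from a synthetic tone.

```python
import math

def rms_volume(samples):
    """Root-mean-square amplitude of a frame of samples (floats in [-1, 1])."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def estimate_pitch(samples, sample_rate, fmin=80.0, fmax=500.0):
    """Estimate the fundamental frequency in Hz with a naive autocorrelation.

    The search range (fmin..fmax) is an assumed range for a human voice.
    Returns None if no positive correlation peak is found.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_score = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with a copy of itself shifted by `lag` samples.
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else None

# Synthetic stand-in for a microphone frame: a 220 Hz tone at half amplitude.
sample_rate = 8000
tone = [0.5 * math.sin(2 * math.pi * 220 * n / sample_rate)
        for n in range(1024)]

volume = rms_volume(tone)
pitch = estimate_pitch(tone, sample_rate)
print(f"volume={volume:.3f}, pitch={pitch:.1f} Hz")
```

Nothing here needs a language model or a dictionary, which is why this kind of processing is so much lighter than speech recognition.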

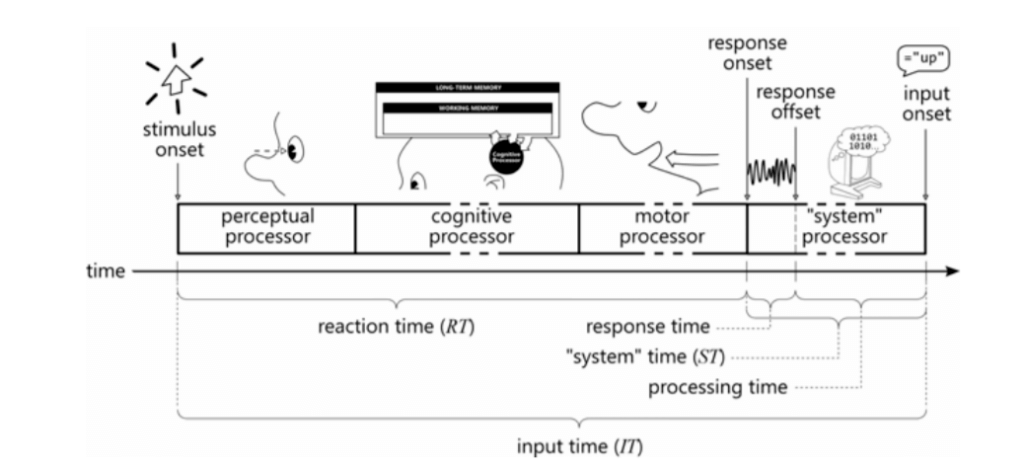

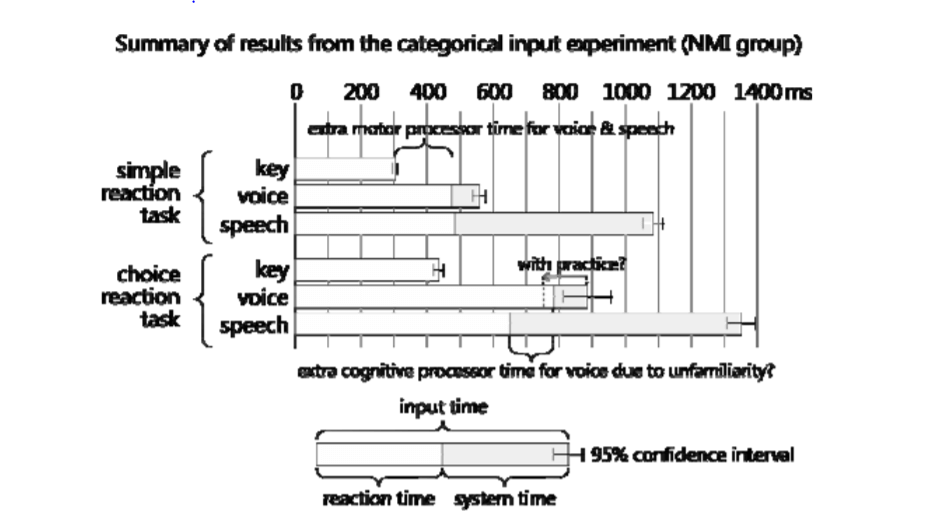

All voice-controlled solutions can be divided into these two major groups. It is worth highlighting an important difference between the two, and that is computational time. As described by Susumu Harada et al. in their study, the great advantage of physical input over all voice control is that computers process the received signals almost immediately.

The processing time of speech recognition is significantly longer. The spoken words and sentences have to be recorded first, then the individual words are processed and interpreted, and even the syntax has to be decoded.

In comparison, the computation time of voice control falls roughly between that of physical input and that of speech recognition. The disadvantage of voice control is that in most cases the user has to give commands that are unusual compared to other control principles and require learning, so the user’s response time may be slower initially.

In addition, processing voice-based signals currently requires such complex calculations that it is faster to perform them in a remote data center than on the device itself. So for now, how fast your device can process audio signals also depends on the speed of your internet connection.

How to use voice control in games?

It is important to emphasize that for the vast majority of video games available today, response time and processing time are particularly important. After all, these games are mostly based on challenges that require the user to provide input within a given (usually very short) time. For example, in a car racing game we have to react to corners before we hit the wall, and in a shooting game we have to pull the trigger in time or take cover from opponents before we take damage. Until voice-based control can match the speed of physical control, it can only be used in special cases where timing is not an important factor.

Speech recognition is easiest to implement in trivia or quiz-based games, or in essentially text-based games such as the role-playing game Zork. Here, in general, not only the control but also the presentation can be completely sound-based. Naturally, this is much easier to do in a trivia game than in a more complex role-playing game.

https://www.youtube.com/watch?v=tZW9_h-mp6U

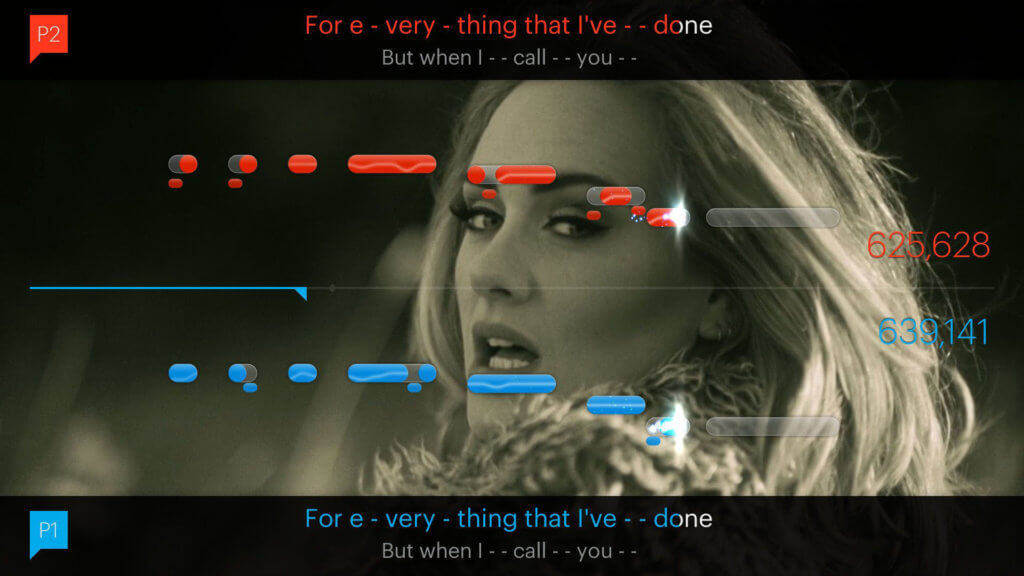

The most obvious use of voice recognition is in applications related to ancient forms of human expression: singing and music. For example, there are plenty of karaoke-based games that compare a user’s sense of rhythm and ability to hit certain pitches (in plain English: singing skills) with the characteristics of the songs, and assign scores based on that. These games usually plot the rhythm and pitch of the corresponding sounds in a coordinate system similar to sheet music.
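As a rough illustration of the pitch half of such scoring, the sketch below compares a sequence of sung pitches with the target notes of a song and counts how many land within half a semitone. The function names, the tolerance, and the 0 to 100 scale are assumptions made for this example; commercial karaoke games use their own scoring rules, which are not described here.

```python
import math

def semitone_distance(sung_hz, target_hz):
    """Absolute distance between two frequencies, measured in semitones."""
    return abs(12 * math.log2(sung_hz / target_hz))

def score_performance(sung, target, tolerance=0.5):
    """Return a 0-100 score: the share of target notes the singer hit.

    `sung` holds one detected pitch (Hz) per target note, or None where
    no pitch was detected. `tolerance` is an assumed threshold in semitones.
    """
    hits = 0
    for sung_hz, target_hz in zip(sung, target):
        if sung_hz is not None and semitone_distance(sung_hz, target_hz) <= tolerance:
            hits += 1
    return round(100 * hits / len(target))

# Target melody: A4, B4, C5, A4. The singer is slightly sharp on the first
# note, clearly flat on the second, silent on the third, exact on the last.
target_notes = [440.0, 493.88, 523.25, 440.0]
sung_notes = [442.0, 470.0, None, 440.0]

print(score_performance(sung_notes, target_notes))
```

Measuring the error in semitones rather than in hertz keeps the tolerance the same for low and high notes, since musical pitch is perceived on a logarithmic scale.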

The Harada study cited earlier mentions the Vocal Joystick Engine developed at the University of Washington. The essence of this is that any input that records continuous movement, such as a mouse or a joystick, can be controlled by mere sounds. Vowel types are assigned directions, while pitch and volume are assigned speeds. This makes it possible to control any complex mouse-based game with just a microphone, but it is inherently much slower than traditional control modes and has a much steeper learning curve.
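The principle can be sketched in a few lines. Note that this is not the Vocal Joystick Engine's actual code or API: the vowel-to-direction table, the function names, and the speed constants below are hypothetical, and the sketch uses only volume for speed and assumes that some upstream classifier has already labelled the vowel and measured the volume of each audio frame.

```python
# Hypothetical vowel-to-direction table; the real engine's mapping may differ.
VOWEL_DIRECTIONS = {
    "a": (0, 1),    # up
    "e": (1, 0),    # right
    "o": (0, -1),   # down
    "u": (-1, 0),   # left
}

def pointer_velocity(vowel, volume, max_speed=300.0, noise_floor=0.05):
    """Map a classified vowel and a volume in [0, 1] to a velocity in px/s.

    Unknown vowels and sounds quieter than the (assumed) noise floor
    leave the pointer still.
    """
    direction = VOWEL_DIRECTIONS.get(vowel)
    if direction is None or volume < noise_floor:
        return (0.0, 0.0)
    speed = max_speed * min(volume, 1.0)  # louder means faster
    return (direction[0] * speed, direction[1] * speed)

def move(position, vowel, volume, dt):
    """Advance the pointer position by one audio frame lasting dt seconds."""
    vx, vy = pointer_velocity(vowel, volume)
    return (position[0] + vx * dt, position[1] + vy * dt)

# Holding a loud "e" for half a second moves the pointer to the right.
print(move((0.0, 0.0), "e", 1.0, 0.5))
```

Even in this toy form the learning curve is visible: the player has to memorize which vowel means which direction and control loudness precisely, which a mouse user never has to think about.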

As the video above shows, the Vocal Joystick Engine wasn’t created primarily to truly integrate voice control into the gaming industry. Rather, its primary purpose is to make games accessible to those who are unable to operate traditional input devices due to some disability.

It is also possible to integrate voice control in gaming by using speech recognition assistants within the games themselves. A Hungarian startup called FridAI has developed such a solution: in essence, players can verbally ask for help when they get stuck. The assistant searches available databases (official and player-operated wiki resources) and game-related forums to help you solve the challenge.

Based on a recent patent application, Sony is working on a similar solution. However, their system does not rely on crowdsourcing to overcome obstacles, but rather directs the user towards microtransactions, i.e. help that can essentially be bought for money.

The level of integration could be even deeper if these voice-based assistants were actively built into the game. There are plenty of games where players can enter a larger world and interact with its inhabitants. For now, such conversation engines work relatively simply: you select your own character’s sentences from either a list or a circular dial, and the conversation moves forward along a branching diagram based on your selection.
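A conversation engine of this kind can be pictured as a small tree of nodes. The sketch below is a generic illustration, not the format of any specific game: the node names, the dialogue lines, and the `run_dialogue` helper are all invented for the example.

```python
# Each node holds the NPC's line and the player's options; every option
# is a (sentence, next_node_id) pair. All content here is made up.
DIALOGUE = {
    "start": {
        "npc": "Halt! What brings you to the village?",
        "choices": [
            ("I'm looking for work.", "work"),
            ("Just passing through.", "end"),
        ],
    },
    "work": {
        "npc": "The blacksmith needs a hand.",
        "choices": [("Thanks.", "end")],
    },
    "end": {"npc": "Safe travels.", "choices": []},
}

def run_dialogue(tree, picks, start="start"):
    """Walk the tree using a list of choice indices.

    Returns the NPC lines spoken along the chosen path. The walk stops
    when it reaches a node that offers no further choices.
    """
    node_id, spoken = start, []
    picks = iter(picks)
    while True:
        node = tree[node_id]
        spoken.append(node["npc"])
        if not node["choices"]:
            return spoken
        _, node_id = node["choices"][next(picks)]

for line in run_dialogue(DIALOGUE, [0, 0]):
    print(line)
```

The limitation is plain from the data structure: the player can only ever say a sentence that a writer has put into a `choices` list in advance.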

By replacing these conversation engines with speech-based assistants, we could create real dialogues in which we would not choose from pre-written sentences but engage in immersive role-playing. So far, no game developer has technology sophisticated enough to build such an assistant system for every character, but this clearly seems to be the next step in the evolution of games.

Obstacles and challenges for voice-based games

For the time being, the main obstacle to voice- and speech-controlled games is that this type of input is slower than the available alternatives. However, processing time is improving day by day, and it is entirely plausible that a smartphone may soon be able to process audio signals on the device itself. In time, as these solutions become more common, human response time will likely also shorten as we become accustomed to this type of control.

It is worth mentioning that voice-based assistants are only really effective in a few languages – mostly in English. However, as these enhancements become available in other languages, more and more users will have the opportunity to try out voice-based games.

An undeniable advantage of physical input (and a glaring disadvantage of voice control) is that its use is already widespread at the skill level. Children born today often learn to handle a tablet before saying their first word. This further hurts the chances of voice control in a speed battle with physical inputs.

Another major obstacle to the spread of voice-based games is the fact that video gaming is basically considered a solitary, quiet activity. For example, no one wants to play a voice-based game on their lunch break or during their commute, even though, according to mobile game statistics, these are the times when most users find time to play.

The technology is in danger of getting bogged down at the “gimmick” level for an extended period of time. For the time being, it is really difficult to find a truly relevant, irreplaceable use for voice-based games, apart from the trivia and singing-based solutions mentioned above. On the other hand, what would really reel players in, such as an in-game chat engine based on real-time assistants, is still technologically too complex for studios. In the meantime, however, there are areas where voice-based control can really help, and where developers can further improve the technology.

How and why is it worth developing games based on voice-control?

The most obvious answer is accessibility. Unfortunately, within the primary (young) target audience of video games, there are many individuals who are unable to operate traditional controllers due to various disabilities. Developers can create accessibility solutions that make certain features of the game voice-controlled. These experiments could produce practices demonstrating that voice-based control also has a place in complex games.

There are also plenty of situations where the user’s hands are already tied up, so to speak, by some other physical controller. Voice-based communication already complements most of today’s games: in these instances, we do not control a programmed aspect of the game, but rather group dynamics and strategy, which require no development work. For example, in a multiplayer game we can discuss how to get around the building from the right side in the next round and attack the other team from that direction. In some ways, this could be classified as voice-based game control.

Another prime example is driving. The game Drivetime is a 100% voice-controlled quiz game designed specifically for drivers. You can play it safely while your hands are on the wheel and your eyes are on the road ahead.

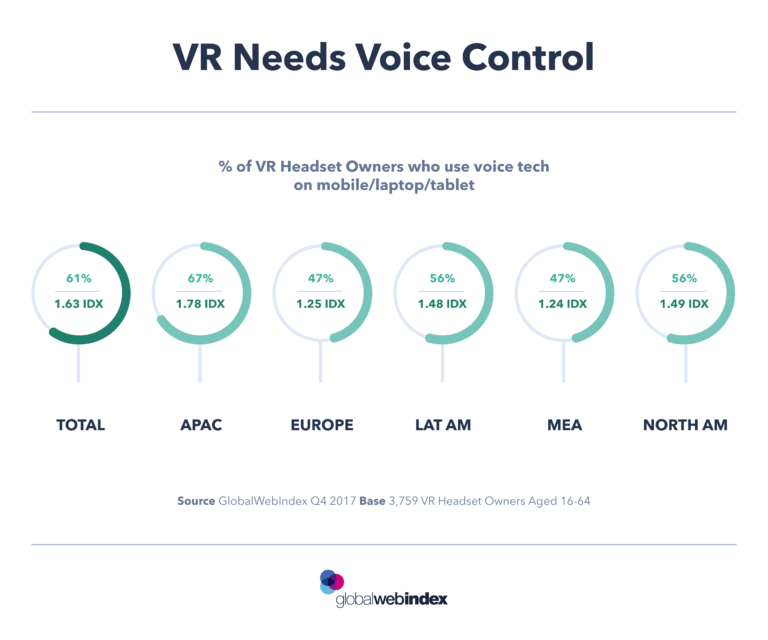

Although still an evolving field, voice-based control can also make great strides in virtual reality. The primary reason for this is that current VR users are still considered early adopters, so they could be persuaded to try new modes of control. In addition, well-designed voice control can help make VR games even more immersive, which is also one of the goals of the whole technology.

The territory lying ahead of voice-based games is full of untapped opportunities, but we should not ignore or dismiss the obstacles. It is almost certain that processing time will shorten and more complex systems will be developed, but there’s a long road ahead of us. In the meantime, developers need to actively seek opportunities to take advantage of the strengths of the technology in order to achieve a real breakthrough and reach the wider gaming community.
